The Lean Programming Language and Theorem Prover

Richard Kelley

(Based on slides by Leonardo de Moura)

Testing vs. Formal Verification

-

Testing: "This program works on these inputs."

-

Formal verification: "This program works on all inputs."

-

Testing can only show the presence of bugs, not their absence.

-

Formal verification can give a mathematical guarantee of correctness.

-

Why have we only recently been able to do formal verification for real-world software?

-

Previously, the cost of writing and checking proofs was too high.

-

Recent advances in proof assistants and AI have made it more feasible.

-

Last Month, Something Unexpected Happened

A general-purpose AI, with no special training for theorem proving, converted zlib (a C compression library) to Lean, passed the test suite, and then proved that the code is correct.

Not tested. Proved. For every possible input. lean-zip

"This was not expected to be possible yet."

How?

What is Lean?

-

A programming language and a proof assistant

-

Same language for code and proofs. No translation layer.

-

Small trusted kernel. Proofs can be exported and independently checked.

-

Lean is implemented in Lean. Very extensible.

-

The math community made Lean their own: >50,000 lines of extensions.

def reverse : List α → List α

| [] => []

| x :: xs => reverse xs ++ [x]

theorem reverse_append (xs ys : List α)

: reverse (xs ++ ys) = reverse ys ++ reverse xs := byα:Type u_1xs:List αys:List α⊢ reverse (xs ++ ys) = reverse ys ++ reverse xs

induction xs with

| nil =>α:Type u_1ys:List α⊢ reverse ([] ++ ys) = reverse ys ++ reverse [] simp [reverse]All goals completed! 🐙

| cons x xs ih =>α:Type u_1ys:List αx:αxs:List αih:reverse (xs ++ ys) = reverse ys ++ reverse xs⊢ reverse (x :: xs ++ ys) = reverse ys ++ reverse (x :: xs) simp [reverse, ih]All goals completed! 🐙

#eval[3, 2, 1] reverse [1, 2, 3]

[3, 2, 1]

Success Stories

Several in mathematics, but I want to focus on software verification.

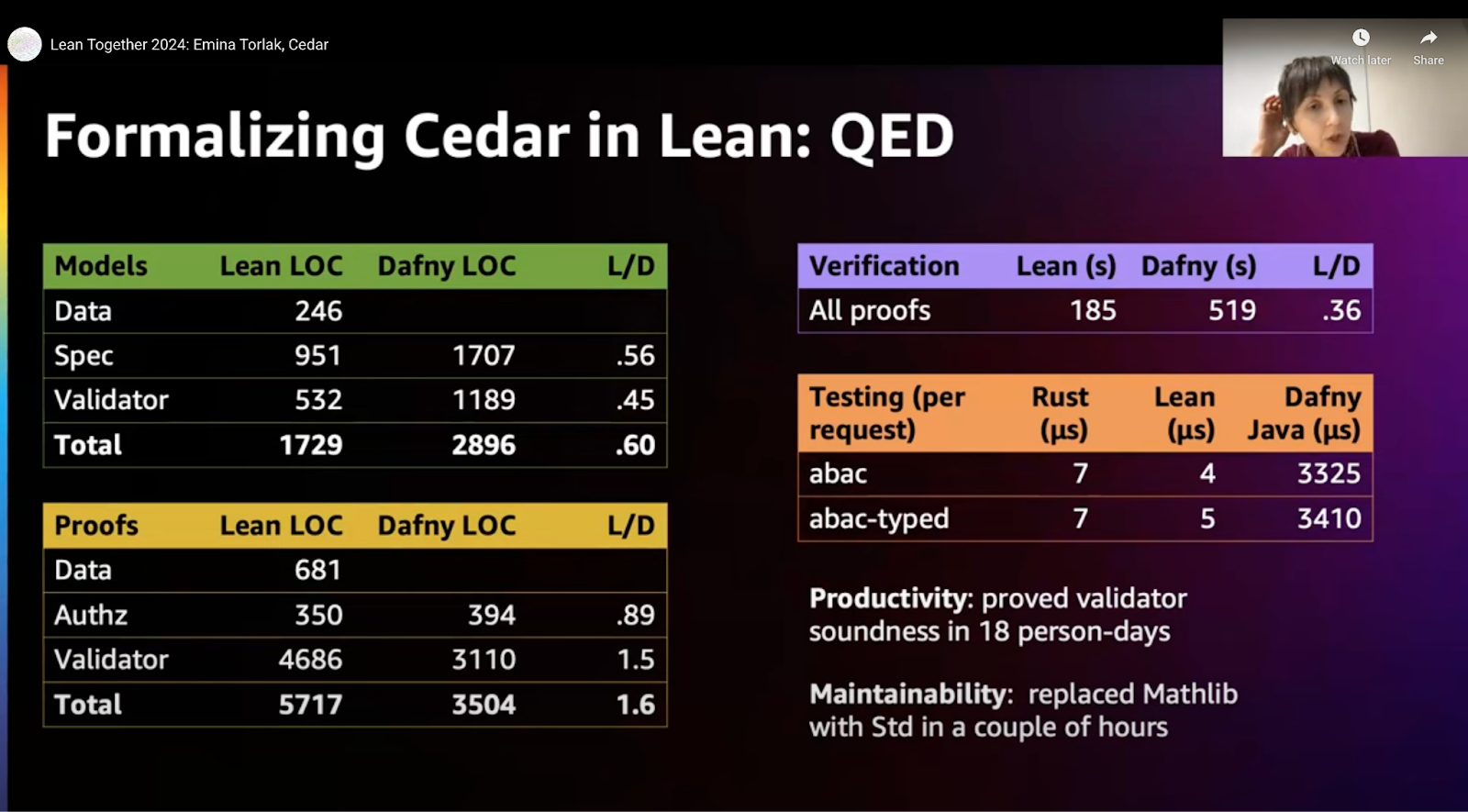

Cedar (AWS)

-

Authorization policy engine. Verified in Lean.

-

No version ships unless proofs pass.

"We've found Lean to be a great tool for verified software development." — Emina Torlak

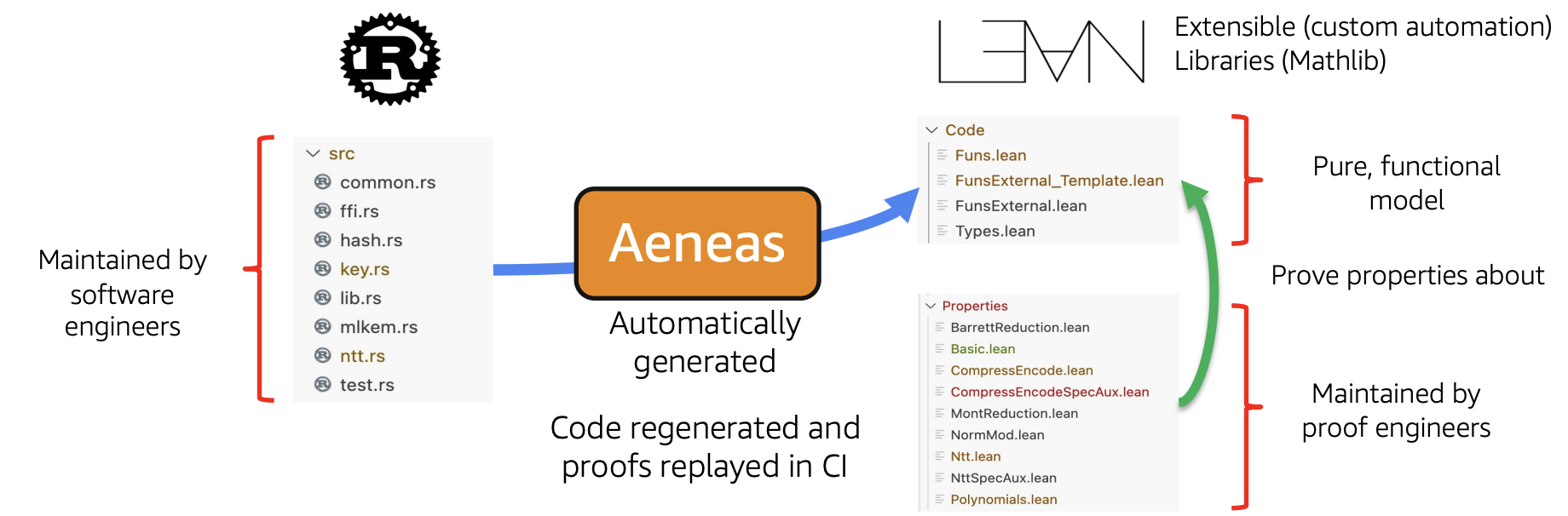

SymCrypt (Microsoft)

-

Core cryptographic library. Verified using Aeneas + Lean.

-

They verify the Rust code as written by software engineers.

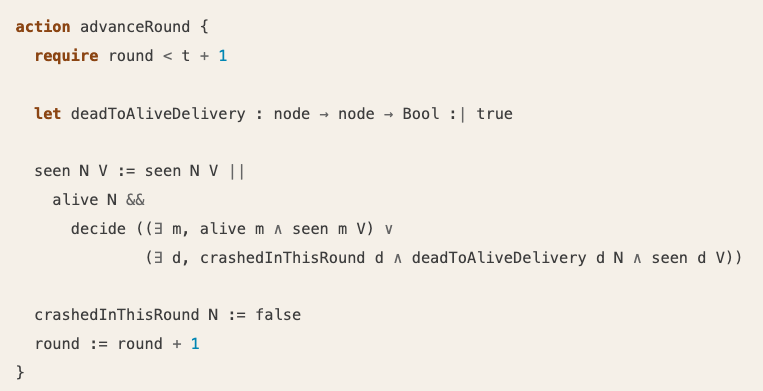

Veil: Verified Distributed Protocols

-

Combines model checking with full formal proof.

-

Found an inconsistency in a prior formal verification of Rabia consensus that went undetected across two separate tools.

Some Simple Lean

Lean is a full programming language, so you can write code like this:

def x : Nat := 5

def y : Nat := 4

def sum : Nat := x + y

#eval9 sum

9

More Simple Lean

-

We can also work with Strings, Lists, and more complex data structures.

#eval"it is no" String.append "it is " (if 1 > 2 then "yes" else "no")

"it is no"#eval[1, 4, 9] [1, 2, 3].map (fun x => x * x)

[1, 4, 9]

We can define functions

def factorial : Nat → Nat

| 0 => 1

| n + 1 => (n + 1) * factorial n

#eval120 factorial 5

120

-

This is recursive function definition.

-

This is also an example of pattern matching: the function behaves differently on

0and onn + 1. -

This is equivalent to the more familiar definition using

if, but often more concise and easier to read.

Function definitions can name parameters

def addThree (n : Nat) : Nat := n + 3

#eval10 addThree 7

10

-

(n : Nat)says thataddThreetakes a natural number parameter namedn. -

addThree 7passes7as the value of that parameter. -

Lean function calls use spaces, so we write

addThree 7, notaddThree(7).

We can also define structures for more complex data

structure Person where

name : String

age : Nat

def richard : Person := { name := "Richard", age := 42 }

#evaltrue richard.age > 30

true

BUT we can also write proofs!

theorem addition_commutes (a b : Nat) : a + b = b + a := bya:Natb:Nat⊢ a + b = b + a

induction a with

| zero =>b:Nat⊢ 0 + b = b + 0 simpAll goals completed! 🐙

| succ a ih =>b:Nata:Natih:a + b = b + a⊢ a + 1 + b = b + (a + 1) grindAll goals completed! 🐙

#checkaddition_commutes (a b : Nat) : a + b = b + a addition_commutes

addition_commutes (a b : Nat) : a + b = b + a

How does this work?

Why does it look like addition_commutes is a function with a weird type?

Dependent Types

-

In most programming languages, values and types live in separate worlds.

-

In Lean, types can depend on values.

-

That means a type can say more than "this is a list"; it can say "this is a list of length

n." -

It's not obvious at first, but giving types the ability to depend on values opens the door to writing proofs directly into our code.

Propositions as Types

-

In Lean, propositions are just types.

-

A proof of a proposition is just a value of that type.

-

This is called the "propositions as types" principle, and it's a powerful way to connect programming and proving.

-

Also known as the Curry-Howard correspondence: for every proposition, there is a corresponding type, and for every proof of that proposition, there is a corresponding value of that type.

-

Propositions as Types

def odd (n : Nat) : Prop := ∃ k, n = 2 * k + 1

-

The type of

odd 3isProp, a statement that can be true or false. -

For any n,

odd nis the assertion that the numberncan be written as2 * k + 1for somek- precisely the definition of an odd number. -

We can't evaluate it:

oddreturns aProp, not aBool. -

But we can prove it by providing a witness.

example : odd 3 := ⟨1, by⊢ 3 = 2 * 1 + 1 decideAll goals completed! 🐙⟩

Theorem Proving Is a Game

"You have written my favorite computer game" — Kevin Buzzard

The "game board": you see goals and hypotheses, then apply "moves" (tactics).

theorem square_of_odd_is_odd : odd n → odd (n * n) := byn:Nat⊢ odd n → odd (n * n)

intro ⟨k₁, e₁⟩n:Natk₁:Nate₁:n = 2 * k₁ + 1⊢ odd (n * n)

simp [e₁, odd]n:Natk₁:Nate₁:n = 2 * k₁ + 1⊢ ∃ k, (2 * k₁ + 1) * (2 * k₁ + 1) = 2 * k + 1

exists 2 * k₁ * k₁ + 2 * k₁n:Natk₁:Nate₁:n = 2 * k₁ + 1⊢ (2 * k₁ + 1) * (2 * k₁ + 1) = 2 * (2 * k₁ * k₁ + 2 * k₁) + 1

grindAll goals completed! 🐙

Each tactic transforms the goal until nothing remains. The kernel checks the final proof term.

The Kernel Catches Mistakes

example : odd 3 := ⟨2, by⊢ 3 = 2 * 2 + 1 decide⊢ 3 = 2 * 2 + 1Tactic `decide` proved that the proposition

3 = 2 * 2 + 1

is false⟩

You don't need to trust me, or my automation. You only need to trust the small kernel.

An incorrect proof is rejected immediately.

Lean has multiple independent kernels.

You can build your own and submit it to arena.lean-lang.org.

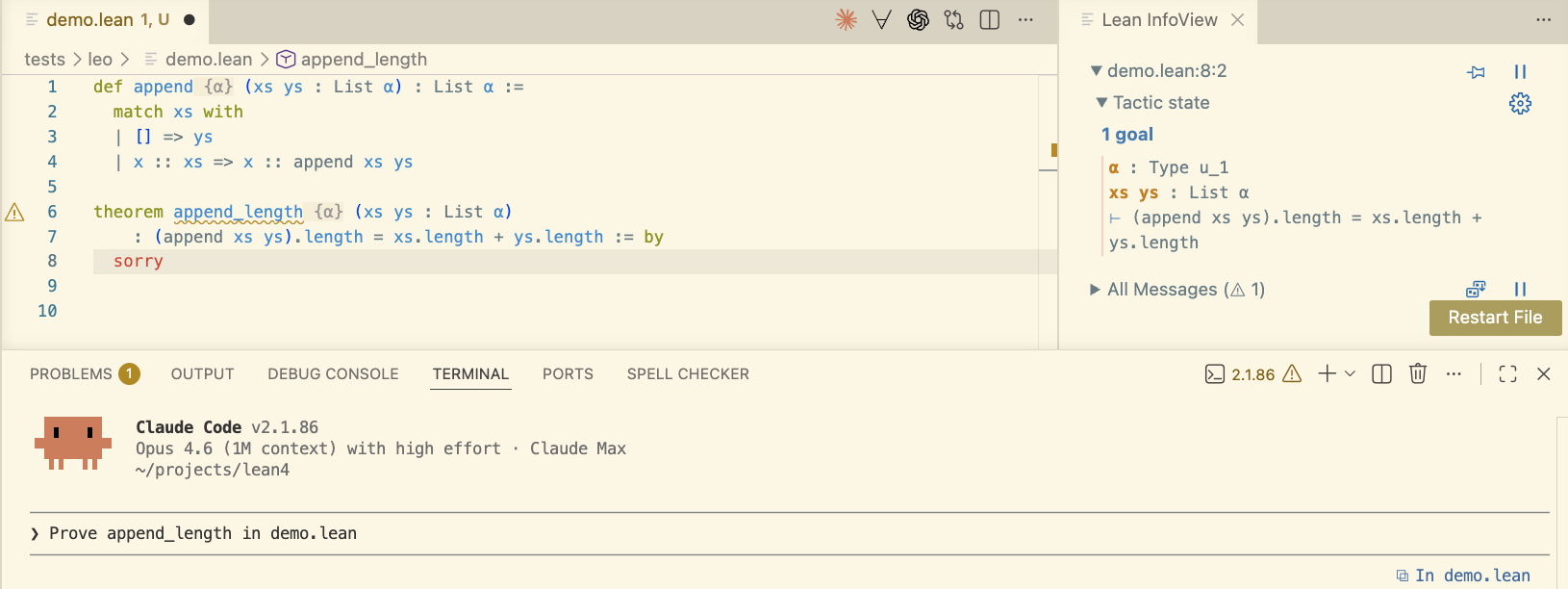

How do we write Lean?

-

It's a programming language, so you can use any text editor.

-

Most people use VS Code, because of an extension that gives real-time feedback.

VS Code Extension

-

Real-time feedback: errors, goals, and hints as you type.

-

Goal state panel: see what you need to prove at each tactic step.

-

Hover information: types, docstrings, source locations.

-

Go to definition. Find references. Code actions.

-

200,000+ installs.

The IDE is where you collaborate with AI now.

Examples

Types and Pattern Matching

inductive Tree (α : Type u) where

| leaf

| node (left : Tree α) (value : α) (right : Tree α)

deriving Inhabited, Repr

def Tree.map (f : α → β) : Tree α → Tree β

| .leaf => .leaf

| .node left value right =>

.node (map f left) (f value) (map f right)

#evalTree.node (Tree.leaf) 6 (Tree.node (Tree.leaf) 10 (Tree.leaf)) Tree.map (2*·) (.node .leaf 3 (.node .leaf 5 .leaf))

Tree.node (Tree.leaf) 6 (Tree.node (Tree.leaf) 10 (Tree.leaf))

Theorems

Three proofs of the same theorem.

theorem Tree.map_id₁ (tree : Tree α) : tree.map id = tree := byα:Type u_1tree:Tree α⊢ map id tree = tree

induction tree with

| leaf =>α:Type u_1⊢ map id leaf = leaf rflAll goals completed! 🐙

| node l v r ih_l ih_r =>α:Type u_1l:Tree αv:αr:Tree αih_l:map id l = lih_r:map id r = r⊢ map id (l.node v r) = l.node v r simp [map, *]All goals completed! 🐙

theorem Tree.map_id₂ (tree : Tree α) : tree.map id = tree := byα:Type u_1tree:Tree α⊢ map id tree = tree

fun_induction Tree.mapα:Type u_1⊢ leaf = leafα:Type u_1a✝²:Tree αa✝¹:αa✝:Tree αih2✝:map id a✝² = a✝²ih1✝:map id a✝ = a✝⊢ (map id a✝²).node (id a✝¹) (map id a✝) = a✝².node a✝¹ a✝ <;>α:Type u_1⊢ leaf = leafα:Type u_1a✝²:Tree αa✝¹:αa✝:Tree αih2✝:map id a✝² = a✝²ih1✝:map id a✝ = a✝⊢ (map id a✝²).node (id a✝¹) (map id a✝) = a✝².node a✝¹ a✝ simp [*]All goals completed! 🐙

theorem Tree.map_id₃ (tree : Tree α) : tree.map id = tree := byα:Type u_1tree:Tree α⊢ map id tree = tree

try?All goals completed! 🐙Try these:

[apply] (fun_induction map) <;> grind

[apply] (fun_induction map) <;> simp_all

[apply] (fun_induction map) <;> simp [*]

[apply] (fun_induction map) <;> simp only [id_eq, *]

[apply] (fun_induction map) <;> grind only [= id.eq_1]

Theorems

Three proofs of the same theorem.

theorem Tree.map_compose₁ (tree : Tree α) (f : α → β) (g : β → γ)

: (tree.map f).map g = tree.map (g ∘ f) := byα:Type u_1β:Type u_2γ:Type u_3tree:Tree αf:α → βg:β → γ⊢ map g (map f tree) = map (g ∘ f) tree

induction tree with

| leaf =>α:Type u_1β:Type u_2γ:Type u_3f:α → βg:β → γ⊢ map g (map f leaf) = map (g ∘ f) leaf rflAll goals completed! 🐙

| node l v r ih_l ih_r =>α:Type u_1β:Type u_2γ:Type u_3f:α → βg:β → γl:Tree αv:αr:Tree αih_l:map g (map f l) = map (g ∘ f) lih_r:map g (map f r) = map (g ∘ f) r⊢ map g (map f (l.node v r)) = map (g ∘ f) (l.node v r) simp [map, *]All goals completed! 🐙

theorem Tree.map_compose₂ (tree : Tree α) (f : α → β) (g : β → γ)

: (tree.map f).map g = tree.map (g ∘ f) := byα:Type u_1β:Type u_2γ:Type u_3tree:Tree αf:α → βg:β → γ⊢ map g (map f tree) = map (g ∘ f) tree

fun_induction Tree.map f treeα:Type u_1β:Type u_2γ:Type u_3f:α → βg:β → γ⊢ map g leaf = map (g ∘ f) leafα:Type u_1β:Type u_2γ:Type u_3f:α → βg:β → γa✝²:Tree αa✝¹:αa✝:Tree αih2✝:map g (map f a✝²) = map (g ∘ f) a✝²ih1✝:map g (map f a✝) = map (g ∘ f) a✝⊢ map g ((map f a✝²).node (f a✝¹) (map f a✝)) = map (g ∘ f) (a✝².node a✝¹ a✝) <;>α:Type u_1β:Type u_2γ:Type u_3f:α → βg:β → γ⊢ map g leaf = map (g ∘ f) leafα:Type u_1β:Type u_2γ:Type u_3f:α → βg:β → γa✝²:Tree αa✝¹:αa✝:Tree αih2✝:map g (map f a✝²) = map (g ∘ f) a✝²ih1✝:map g (map f a✝) = map (g ∘ f) a✝⊢ map g ((map f a✝²).node (f a✝¹) (map f a✝)) = map (g ∘ f) (a✝².node a✝¹ a✝) simp [map, *]All goals completed! 🐙

theorem Tree.map_compose₃ (tree : Tree α) (f : α → β) (g : β → γ)

: (tree.map f).map g = tree.map (g ∘ f) := byα:Type u_1β:Type u_2γ:Type u_3tree:Tree αf:α → βg:β → γ⊢ map g (map f tree) = map (g ∘ f) tree

try?All goals completed! 🐙Try these:

[apply] (fun_induction map f tree) <;> grind [= map]

[apply] (fun_induction map f tree) <;> grind only [map]

[apply] (fun_induction map f tree) <;> grind => instantiate only [map]

Structures

structure Point (α : Type u) where

x : α

y : α

inductive Color where

| red | green | blue

structure ColorPoint (α : Type u) extends Point α where

c : Color

def p : ColorPoint Nat :=

{ x := 10, y := 20, c := .red }

example : p.x = 10 := rfl

example : p.y = 20 := rfl

example : p.c = .red := rfl

#eval30 p.x + p.y

30

Option and do Notation

def find? (p : α → Bool) (xs : List α) : Option α := do

for x in xs do

if not (p x) then

continue

return x

failure

#evalsome 7 find? (· > 5) [1, 3, 5, 7, 10]

some 7

Example: A Rate Limiter

-

Tiny example, but it has the same shape as real verified systems.

-

Imagine an API service that wants to avoid being overwhelmed by bursts of traffic.

-

The service keeps a small bucket of tokens. Each granted request spends one token.

-

Over time, the bucket refills, but it never grows past a fixed capacity.

-

If a request arrives when no token is available, the limiter rejects it.

-

State: how many tokens are available.

-

Parameters: capacity and refill rate.

-

Input: either

.idleor.request. -

Step function: refill, maybe consume one token, return the new state and whether the request was granted.

Rate Limiter State

-- State of the rate limiter

structure RLState where

-- How many tokens are left in the bucket.

tokens : Nat

deriving Repr

-

structuredefines a record type. -

An

RLStatevalue has one field:tokens. -

Natmeans a natural number, so tokens can never be negative. -

deriving Reprasks Lean to generate a printable representation for debugging and examples.

Rate Limiter Parameters

-- Parameters

structure RLParams where

capacity : Nat

refill : Nat

deriving Repr

-

Parameters are values that configure the state machine.

-

capacityis the maximum number of tokens the limiter can hold. -

refillis how many tokens are added at each step. -

Both are

Nat, so the model rules out negative capacities and negative refill rates.

Rate Limiter Inputs

inductive RLInput

| idle

| request

deriving Repr

-

inductivedefines a type by listing its possible cases. -

RLInputhas exactly two possible values:.idleand.request. -

.idlemeans time passes and the bucket refills, but no request arrives. -

.requestmeans time passes, the bucket refills, and then the limiter tries to grant one request.

Rate Limiter Step Function

-- One step of the system

def step (p : RLParams) (s : RLState) : RLInput → RLState × Bool

| .idle =>

let tokens₁ := min p.capacity (s.tokens + p.refill)

({ tokens := tokens₁ }, false)

| .request =>

let tokens₁ := min p.capacity (s.tokens + p.refill)

if _h : 0 < tokens₁ then

({ tokens := tokens₁ - 1 }, true)

else

({ tokens := tokens₁ }, false)

-

stepis a pure state transition function. -

It takes parameters, the current state, and one input.

-

It returns the next state plus a

Bool: was the request granted? -

Both branches refill first, capped by

capacity. -

A request succeeds exactly when at least one token is available after refill.

Testing the Rate Limiter

-

Before proving a theorem, we can still write ordinary concrete tests.

-

In Lean, a test can be an

example: a small proposition that Lean must check. -

rflproves the equality by reducing both sides to the same value. -

If the implementation changes and this expected result is no longer true, this slide stops compiling.

-- A few concrete tests, written as examples that Lean checks.

section Tests

def testParams : RLParams :=

{ capacity := 3, refill := 1 }

example : step testParams { tokens := 0 } .idle = ({ tokens := 1 }, false) := by⊢ step testParams { tokens := 0 } RLInput.idle = ({ tokens := 1 }, false)

rflAll goals completed! 🐙

end Tests

Testing Requests

example : step testParams { tokens := 0 } .request =

({ tokens := 0 }, true) := by⊢ step testParams { tokens := 0 } RLInput.request = ({ tokens := 0 }, true)

rflAll goals completed! 🐙

example : step testParams { tokens := 3 } .request =

({ tokens := 2 }, true) := by⊢ step testParams { tokens := 3 } RLInput.request = ({ tokens := 2 }, true)

rflAll goals completed! 🐙

example : step { capacity := 0, refill := 0 } { tokens := 0 } .request =

({ tokens := 0 }, false) := by⊢ step { capacity := 0, refill := 0 } { tokens := 0 } RLInput.request = ({ tokens := 0 }, false)

rflAll goals completed! 🐙

-

Empty bucket, normal refill:

0refills to1, the request consumes it, and the result istrue. -

Full bucket:

3 + 1is capped at capacity3, then one token is consumed. -

Zero capacity: refill cannot create any token, so the request is rejected with

false.

Invariants

-

An invariant is a fact that should remain true as the system runs.

-

For this rate limiter: the number of tokens should never exceed capacity.

-

In Lean, invariants are written directly in code, in the same file as the implementation.

-

Propmeans this is a logical proposition, not a runtime Boolean.

-- Safety invariant: tokens never exceed capacity

def Invariant (p : RLParams) (s : RLState) : Prop :=

s.tokens ≤ p.capacity

Specifications

-

A specification says what the implementation is supposed to mean.

-

Lean lets us express the spec as executable-adjacent code, using the same data types.

-

Later, we can prove that

stepmatches this specification for every input state.

Invariants and Specifications for the Rate Limiter

-

What facts should remain true no matter how many steps the limiter takes?

-

Can the token count ever be negative? Can it ever exceed

capacity? -

When should a

.requestbe granted? -

What should happen when the bucket is empty after refill?

-

Which claims are safety invariants, and which claims describe functional correctness?

canGrant

-

canGrantcaptures the condition under which a request should succeed.

-- Spec: when should a request be granted?

def canGrant (p : RLParams) (s : RLState) : Prop :=

0 < min p.capacity (s.tokens + p.refill)

Invariant Preservation

theorem step_preserves_inv

(p : RLParams) (s : RLState) (i : RLInput) :

Invariant p s → Invariant p (step p s i).1

-

For any parameters

p, current states, and inputi: -

If the invariant is true before one step,

-

then the invariant is true after running

step. -

(step p s i).1means the next state, ignoring the Boolean output.

Invariant Preservation

-- Invariant is preserved by one step

theorem step_preserves_inv

(p : RLParams) (s : RLState) (i : RLInput) :

Invariant p s → Invariant p (step p s i).1 := byp:RLParamss:RLStatei:RLInput⊢ Invariant p s → Invariant p (step p s i).fst

intro hp:RLParamss:RLStatei:RLInputh:Invariant p s⊢ Invariant p (step p s i).fst

unfold step Invariantp:RLParamss:RLStatei:RLInputh:Invariant p s⊢ (match i with

| RLInput.idle =>

have tokens₁ := min p.capacity (s.tokens + p.refill);

({ tokens := tokens₁ }, false)

| RLInput.request =>

have tokens₁ := min p.capacity (s.tokens + p.refill);

if _h : 0 < tokens₁ then ({ tokens := tokens₁ - 1 }, true) else ({ tokens := tokens₁ }, false)).fst.tokens ≤

p.capacity

grindAll goals completed! 🐙

-

intro hassumes the invariant holds in the starting state. -

unfoldexpands the definitions ofstepandInvariant. -

grindfinishes the arithmetic and case analysis automatically.

Request Correctness Statement

theorem request_granted_iff

(p : RLParams) (s : RLState) :

(step p s .request).2 = true ↔

0 < min p.capacity (s.tokens + p.refill)

-

This theorem connects the implementation to the specification.

-

The left side is what the implementation returns for a request.

-

The right side is the mathematical condition for granting the request.

-

↔means "if and only if": both directions must be proved.

Request Correctness Proof

-- Functional correctness: implementation matches spec

theorem request_granted_iff

(p : RLParams) (s : RLState) :

(step p s .request).2 = true ↔

0 < min p.capacity (s.tokens + p.refill) := byp:RLParamss:RLState⊢ (step p s RLInput.request).snd = true ↔ 0 < min p.capacity (s.tokens + p.refill)

unfold stepp:RLParamss:RLState⊢ (match RLInput.request with

| RLInput.idle =>

have tokens₁ := min p.capacity (s.tokens + p.refill);

({ tokens := tokens₁ }, false)

| RLInput.request =>

have tokens₁ := min p.capacity (s.tokens + p.refill);

if _h : 0 < tokens₁ then ({ tokens := tokens₁ - 1 }, true) else ({ tokens := tokens₁ }, false)).snd =

true ↔

0 < min p.capacity (s.tokens + p.refill)

grindAll goals completed! 🐙

-

unfold stepexposes the refill and request-handling logic. -

grindsplits the relevant cases and proves both directions of the↔.

Implementation vs. Specification

-

The implementation computes a concrete answer:

(step p s .request).2. -

The specification states the condition we mean:

0 < min p.capacity (s.tokens + p.refill). -

To connect them, we need to rule out two kinds of mismatch.

-

If the implementation returns

true, the spec condition should hold. -

If the spec condition holds, the implementation should return

true.

Soundness

-

Soundness is a one-way guarantee: if the implementation returns

true, then the specification condition holds. -

It rules out false positives: returning

truewhen the spec says the request should not be granted. -

It does not, by itself, say the implementation returns

trueevery time the spec condition holds. -

The other direction is completeness: if the spec condition holds, then the implementation returns

true.

Request Soundness

-- soundness

theorem request_sound

(p : RLParams) (s : RLState) :

(step p s .request).2 = true →

0 < min p.capacity (s.tokens + p.refill) := byp:RLParamss:RLState⊢ (step p s RLInput.request).snd = true → 0 < min p.capacity (s.tokens + p.refill)

unfold stepp:RLParamss:RLState⊢ (match RLInput.request with

| RLInput.idle =>

have tokens₁ := min p.capacity (s.tokens + p.refill);

({ tokens := tokens₁ }, false)

| RLInput.request =>

have tokens₁ := min p.capacity (s.tokens + p.refill);

if _h : 0 < tokens₁ then ({ tokens := tokens₁ - 1 }, true) else ({ tokens := tokens₁ }, false)).snd =

true →

0 < min p.capacity (s.tokens + p.refill)

grindAll goals completed! 🐙

-

If

stepgrants the request, then a token was available after refill. -

The theorem proves the limiter never grants a request without satisfying the spec condition.

-

This is the left-to-right direction of

request_granted_iff.

Request Completeness

-- completeness

theorem request_complete

(p : RLParams) (s : RLState) :

0 < min p.capacity (s.tokens + p.refill) →

(step p s .request).2 = true := byp:RLParamss:RLState⊢ 0 < min p.capacity (s.tokens + p.refill) → (step p s RLInput.request).snd = true

unfold stepp:RLParamss:RLState⊢ 0 < min p.capacity (s.tokens + p.refill) →

(match RLInput.request with

| RLInput.idle =>

have tokens₁ := min p.capacity (s.tokens + p.refill);

({ tokens := tokens₁ }, false)

| RLInput.request =>

have tokens₁ := min p.capacity (s.tokens + p.refill);

if _h : 0 < tokens₁ then ({ tokens := tokens₁ - 1 }, true) else ({ tokens := tokens₁ }, false)).snd =

true

grindAll goals completed! 🐙

-

If the spec condition holds, then

stepgrants the request. -

The theorem proves the limiter does not reject a request when a token is available after refill.

-

This is the right-to-left direction of

request_granted_iff.

Putting Both Directions Together

-

Soundness rules out granting a request when the spec condition is false.

-

Completeness rules out rejecting a request when the spec condition is true.

-

Together, they say the implementation's Boolean result exactly matches the specification.

-

In Lean, the combined statement is the

↔theorem:

theorem request_granted_iff

(p : RLParams) (s : RLState) :

(step p s .request).2 = true ↔

0 < min p.capacity (s.tokens + p.refill)

Proving the Combined Statement

example (p : RLParams) (s : RLState) :

(step p s .request).2 = true ↔

0 < min p.capacity (s.tokens + p.refill) := byp:RLParamss:RLState⊢ (step p s RLInput.request).snd = true ↔ 0 < min p.capacity (s.tokens + p.refill)

constructorp:RLParamss:RLState⊢ (step p s RLInput.request).snd = true → 0 < min p.capacity (s.tokens + p.refill)p:RLParamss:RLState⊢ 0 < min p.capacity (s.tokens + p.refill) → (step p s RLInput.request).snd = true

·p:RLParamss:RLState⊢ (step p s RLInput.request).snd = true → 0 < min p.capacity (s.tokens + p.refill) exact request_sound p sAll goals completed! 🐙

·p:RLParamss:RLState⊢ 0 < min p.capacity (s.tokens + p.refill) → (step p s RLInput.request).snd = true exact request_complete p sAll goals completed! 🐙

-

constructorsplits an↔goal into its two directions. -

The first direction is exactly

request_sound p s. -

The second direction is exactly

request_complete p s.

From One Step to Many Steps

-

So far, every theorem has been about one call to

step. -

That proves the behavior of a single transition: one state, one input, one output.

-

Real systems run for many transitions.

-

To model a run of the limiter, we feed it a list of inputs and thread the state through each step.

-

The result should include the final state and the sequence of grant/reject decisions.

Running the Rate Limiter

-- Run the state machine across a list of inputs.

-- Returns the final state plus the list of outputs.

def run (p : RLParams) : RLState → List RLInput → RLState × List Bool

| s, [] => (s, [])

| s, i :: is =>

let (s', out) := step p s i

let (s'', outs) := run p s' is

(s'', out :: outs)

-

The empty input list leaves the state unchanged and produces no outputs.

-

For

i :: is, we run onestep, then recursively run the remaining inputs. -

out :: outskeeps the Boolean decisions in the same order as the inputs.

Final State

-- Sometimes we only care about the final state.

def finalState (p : RLParams) (s : RLState) (inputs : List RLInput) : RLState :=

(run p s inputs).1

-

runreturns a pair: the final state and the list of Boolean outputs. -

(run p s inputs).1selects the first component of that pair. -

This helper lets later theorems talk only about the state after a whole run.

-

The output history is still available from

runwhen we need it.

Invariant Across a Run

theorem run_preserves_inv

(p : RLParams) (s : RLState) (inputs : List RLInput) :

Invariant p s → Invariant p (finalState p s inputs)

-

This lifts the one-step invariant theorem to any list of inputs.

-

If the initial state satisfies the invariant,

-

then the final state after running all inputs also satisfies the invariant.

-

The proof follows the structure of the input list.

Proving the Run Invariant

-- The invariant is preserved across any run.

theorem run_preserves_inv

(p : RLParams) (s : RLState) (inputs : List RLInput) :

Invariant p s → Invariant p (finalState p s inputs) := byp:RLParamss:RLStateinputs:List RLInput⊢ Invariant p s → Invariant p (finalState p s inputs)

intro hsp:RLParamss:RLStateinputs:List RLInpuths:Invariant p s⊢ Invariant p (finalState p s inputs)

induction inputs generalizing s with

| nil =>p:RLParamss:RLStatehs:Invariant p s⊢ Invariant p (finalState p s [])

simp [finalState, run]p:RLParamss:RLStatehs:Invariant p s⊢ Invariant p s

exact hsAll goals completed! 🐙

| cons i is ih =>p:RLParamsi:RLInputis:List RLInputih:∀ (s : RLState), Invariant p s → Invariant p (finalState p s is)s:RLStatehs:Invariant p s⊢ Invariant p (finalState p s (i :: is))

simp [finalState, run]p:RLParamsi:RLInputis:List RLInputih:∀ (s : RLState), Invariant p s → Invariant p (finalState p s is)s:RLStatehs:Invariant p s⊢ Invariant p (run p (step p s i).fst is).fst

apply ihp:RLParamsi:RLInputis:List RLInputih:∀ (s : RLState), Invariant p s → Invariant p (finalState p s is)s:RLStatehs:Invariant p s⊢ Invariant p (step p s i).fst

exact step_preserves_inv p s i hsAll goals completed! 🐙

-

intro hsassumes the invariant holds in the starting state. -

The empty-list case reduces to the same state, so

hsproves it. -

The nonempty case uses

step_preserves_invfor the first input, then the induction hypothesis for the remaining inputs.

Example: Liskov Substitution

-

Liskov substitution is about contracts.

-

If code expects a rectangle-like thing, it may assume width and height can be changed independently.

-

In Lean, we can write that expectation directly as a contract.

-- A rectangle-like API promises width and height can be set independently.

structure RectAPI where

Shape : Type

width : Shape → Nat

height : Shape → Nat

resize : Shape → Nat → Nat → Shape

resize_area :

∀ s w h, width (resize s w h) * height (resize s w h) = w * h

Contract vs. Representation

-

RectAPIis the contract. -

It says what code using a rectangle-like thing is allowed to expect.

-

There is some shape type, some way to read width and height, and some way to resize.

-

The proof field

resize_areasays resizing towandhreally gives areaw * h.

Contract vs. Representation

-

Rectis the concrete representation. -

It says what one particular rectangle value stores.

-

Here, a rectangle stores two independent numbers:

widthandheight. -

rectAPI : RectAPIconnects the two: -

it packages

Recttogether with operations and a proof that those operations satisfy the contract.

A Rectangle Satisfies the Contract

structure Rect where

width : Nat

height : Nat

def rectAPI : RectAPI where

Shape := Rect

width r := r.width

height r := r.height

resize _ w h := { width := w, height := h }

resize_area := by⊢ ∀ (s : Rect) (w h : Nat), { width := w, height := h }.width * { width := w, height := h }.height = w * h

intro s ws:Rectw:Nat⊢ ∀ (h : Nat), { width := w, height := h }.width * { width := w, height := h }.height = w * h hs:Rectw:Nath:Nat⊢ { width := w, height := h }.width * { width := w, height := h }.height = w * h

rflAll goals completed! 🐙

-

After

resize r w h, the area is exactlyw * h. -

That is the behavior code using

RectAPIis allowed to rely on.

A Square Does Not

structure Square where

side : Nat

def squareResize (_ : Square) (w _h : Nat) : Square :=

{ side := w }

theorem square_not_rect :

¬ (∀ s w h,

(squareResize s w h).side * (squareResize s w h).side = w * h) := by⊢ ¬∀ (s : Square) (w h : Nat), (squareResize s w h).side * (squareResize s w h).side = w * h

intro contractcontract:∀ (s : Square) (w h : Nat), (squareResize s w h).side * (squareResize s w h).side = w * h⊢ False

have bad := contract { side := 1 } 2 3contract:∀ (s : Square) (w h : Nat), (squareResize s w h).side * (squareResize s w h).side = w * hbad:(squareResize { side := 1 } 2 3).side * (squareResize { side := 1 } 2 3).side = 2 * 3⊢ False

simp [squareResize] at badAll goals completed! 🐙

-

The square has only one side length, so it cannot independently remember

wandh. -

The theorem asks whether

squareResizecan satisfy the rectangle contract for everywandh. -

Lean proves the mismatch using the concrete case

w = 2,h = 3: the square area is4, but the rectangle contract requires6.

Liskov Takeaway

-

Mathematically, a square is a rectangle.

-

But a mutable square is not a safe substitute for a mutable rectangle.

-

Subtypes should preserve the behavior their clients are allowed to rely on.

Concluding Thoughts

The Trend: AI Writes the Formal Math

-

The workflow is moving from AI as a helper to AI as a proof-writing partner.

-

AI systems increasingly generate candidate theorems, supporting lemmas, and Lean proofs.

-

Lean gives the feedback loop: models can propose, test, repair, and retry against the kernel.

-

Humans still set the goals and judge meaning; the machine can handle more of the formalization and proof search.

Success Stories: AI

To date, every AI system that solved IMO problems with formal proofs used Lean.

-

AlphaProof (Google DeepMind) — silver medal, IMO 2024

-

Aristotle (Harmonic) — gold medal, IMO 2025

-

SEED Prover (ByteDance) — silver medal, IMO 2025

Three independent teams, all used Lean.

AI Scoreboard

-

Axiom: 12/12 on Putnam 2025.

-

DeepSeek Prover-V2: 88.9% on MiniF2F-test. Open-source, 671B parameters.

-

Harmonic: Built the Aristotle AI — gold medal, IMO 2025

None of these systems existed in early 2024.

The Kernel as AI Safety Infrastructure

"It's really important with these formal proof assistants that there are no backdoors or exploits you can use to somehow get your certified proof without actually proving it, because reinforcement learning is just so good at finding these backdoors." — Terence Tao

The kernel's soundness is not just a theoretical property. It is AI safety infrastructure.

RL agents will find every shortcut. The kernel is the one thing they can't fake.

Who Watches the Provers? | Comparator | Validating a Lean Proof

Another Example: Radix - 10 AI Agents, One Weekend

10 AI agents built a verified embedded DSL with proved optimizations in a single weekend.

-

Zero

sorry— 52 theorems, all complete -

5 verified compiler optimizations

-

Determinism, type safety, memory safety — all proved

-

Interpreter correctness — sound and complete

-

27 modules, ~7,400 lines of Lean

-

Full agent autonomy - no human wrote any of the code or proofs.

Where to Go Next

-

de Moura's talk: I used some of his slides, but his talk also has a large example of a verified DSL.

-

Full Radix: github.com/leodemoura/RadixExperiment

-

Install Lean: lean-lang.org

-

Learn: Functional Programming in Lean, Theorem Proving in Lean 4, Lean Reference Manual

-

Community: Lean Zulip

-

Lean FRO: lean-lang.org/fro/

Other Resources